Abstract

Recent advances in 3D scene editing with Neural Radiance Fields (NeRF) and 3D Gaussian Splatting (3DGS) have enabled high-quality editing of static scenes. Dynamic scene editing, in contrast, remains challenging: methods that directly extend 2D diffusion models to 4D often produce motion artifacts, temporal flickering, and inconsistent style propagation. We introduce Catalyst4D, a framework that transfers high-quality 3D edits to dynamic 4D Gaussian scenes while maintaining spatial and temporal coherence. At its core, Anchor-based Motion Guidance (AMG) builds a set of structurally stable and spatially representative anchors from both the original and the edited Gaussians. These anchors serve as robust region-level references; correspondences between them are established via optimal transport, which enables consistent deformation propagation without cross-region interference or motion drift. Complementarily, Color Uncertainty-guided Appearance Refinement (CUAR) preserves temporal appearance consistency by estimating a per-Gaussian color uncertainty and selectively refining regions prone to occlusion-induced artifacts. Extensive experiments demonstrate that Catalyst4D achieves temporally stable, high-fidelity dynamic scene editing and outperforms existing methods in both visual quality and motion coherence.
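The abstract does not specify how anchors are built or which optimal transport (OT) solver is used, so the following is only a minimal sketch under loudly stated assumptions. Farthest point sampling stands in for anchor construction; entropic OT solved with Sinkhorn iterations (uniform marginals, squared-Euclidean cost on first-frame centers) stands in for the correspondence step; and each edited Gaussian inherits the barycentrically projected motion of its nearest edited anchor. The names `farthest_point_sampling`, `sinkhorn`, and `transfer_deformation` are hypothetical, and this is not the Catalyst4D implementation.

```python
import numpy as np


def farthest_point_sampling(points: np.ndarray, k: int, seed: int = 0) -> np.ndarray:
    """Return k indices of spatially spread-out points (hypothetical anchor selection)."""
    rng = np.random.default_rng(seed)
    chosen = [int(rng.integers(points.shape[0]))]
    dist = np.linalg.norm(points - points[chosen[0]], axis=1)
    for _ in range(1, k):
        idx = int(np.argmax(dist))  # point farthest from all anchors chosen so far
        chosen.append(idx)
        dist = np.minimum(dist, np.linalg.norm(points - points[idx], axis=1))
    return np.array(chosen)


def sinkhorn(cost: np.ndarray, eps: float = 0.05, iters: int = 200) -> np.ndarray:
    """Entropic optimal transport plan between two uniform distributions."""
    m, n = cost.shape
    a, b = np.full(m, 1.0 / m), np.full(n, 1.0 / n)
    K = np.exp(-cost / eps)  # Gibbs kernel
    u, v = np.ones(m), np.ones(n)
    for _ in range(iters):
        u = a / (K @ v)    # rescale to match row marginals
        v = b / (K.T @ u)  # rescale to match column marginals
    return u[:, None] * K * v[None, :]


def transfer_deformation(orig_xyz, orig_disp, edit_xyz, k=16, eps=0.05):
    """Move edited Gaussians with the motion of OT-matched original anchors.

    orig_xyz:  (N, 3) original Gaussian centers at frame 0
    orig_disp: (N, 3) their displacement from frame 0 to some frame t
    edit_xyz:  (M, 3) edited Gaussian centers at frame 0
    """
    ia = farthest_point_sampling(orig_xyz, k)
    ib = farthest_point_sampling(edit_xyz, k)
    A, B = orig_xyz[ia], edit_xyz[ib]
    cost = ((B[:, None, :] - A[None, :, :]) ** 2).sum(-1)
    cost = cost / max(cost.max(), 1e-12)  # normalize so exp(-cost/eps) stays representable
    plan = sinkhorn(cost, eps)  # (k edited anchors, k original anchors)
    w = plan / plan.sum(axis=1, keepdims=True)  # barycentric weights per edited anchor
    anchor_disp = w @ orig_disp[ia]  # (k, 3) motion inherited by each edited anchor
    # Each edited Gaussian follows its nearest edited anchor (its "region").
    d2 = ((edit_xyz[:, None, :] - B[None, :, :]) ** 2).sum(-1)
    return edit_xyz + anchor_disp[np.argmin(d2, axis=1)]
```

In this toy version, assigning each edited Gaussian to a single anchor is what keeps its motion region-local; blending over several nearby anchors would be a smoother variant.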

Method

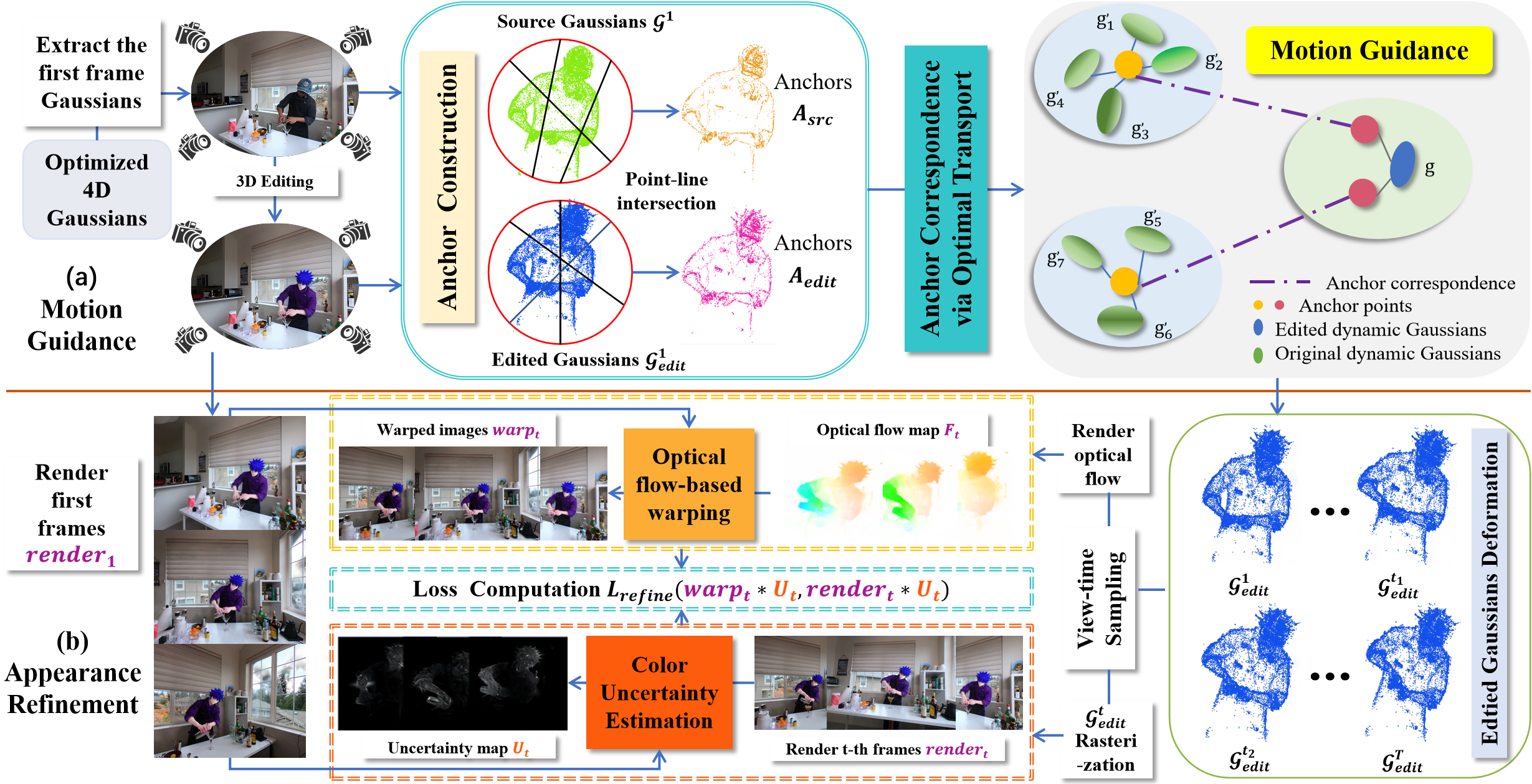

Overview of Catalyst4D. Given dynamic Gaussians whose first frame has been edited, (a) Anchor-based Motion Guidance establishes region-level correspondences with the original Gaussians via anchor construction and optimal transport, enabling reliable deformation transfer. (b) Color Uncertainty-guided Appearance Refinement then leverages first-frame warping and Gaussian color consistency to identify and correct motion-induced artifacts over time.
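The caption does not define how color uncertainty is computed or how refinement is supervised. As a minimal sketch under explicit assumptions, the code below treats a Gaussian's uncertainty as the mean squared deviation of its per-frame color from its edited first-frame color, flags the top 10% (a hypothetical quantile threshold), and runs masked gradient steps on a plain L2 color loss so that only flagged Gaussians change. The per-frame color tracks, the threshold, and the names `color_uncertainty`, `uncertain_mask`, and `selective_refine` are hypothetical stand-ins, not the authors' CUAR.

```python
import numpy as np


def color_uncertainty(track_colors: np.ndarray, ref_colors: np.ndarray) -> np.ndarray:
    """Per-Gaussian uncertainty: mean squared deviation from the first-frame color.

    track_colors: (T, N, 3) color observed for each Gaussian in each of T frames
                  (hypothetically, sampled from the warped first-frame edit)
    ref_colors:   (N, 3) each Gaussian's color in the edited first frame
    """
    return ((track_colors - ref_colors[None]) ** 2).sum(axis=-1).mean(axis=0)


def uncertain_mask(uncertainty: np.ndarray, quantile: float = 0.9) -> np.ndarray:
    """Flag the least temporally consistent Gaussians for refinement."""
    return uncertainty > np.quantile(uncertainty, quantile)


def selective_refine(colors, targets, mask, lr=0.25, steps=20):
    """Gradient descent on an L2 color loss, updating only flagged Gaussians.

    colors:  (N, 3) current Gaussian colors
    targets: (T, N, 3) per-frame target colors (hypothetical supervision)
    mask:    (N,) boolean, True where a Gaussian was flagged as uncertain
    """
    c = colors.copy()
    for _ in range(steps):
        # Gradient of mean_t ||c - targets[t]||^2 with respect to c.
        grad = 2.0 * (c[None] - targets).mean(axis=0)
        c[mask] -= lr * grad[mask]  # unflagged Gaussians are left untouched
    return c
```

Restricting updates to the mask is the point of this design: Gaussians whose color already agrees with the first-frame edit across time keep that edit unchanged, and only the inconsistent ones are re-optimized.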

Results

Qualitative Comparison

Sear_steak: "Turn him into a Minecraft character" (panels: Ours, Instruct4D-to-4D, Instruct4DGS, CTRL-D)

Coffee_martini: "Turn his hat into a newsboy cap" (panels: Ours, Instruct4D-to-4D, Instruct4DGS, CTRL-D)

Cut_roasted_beef: "Turn his clothes into a football player outfit" (panels: Ours, Instruct4D-to-4D, Instruct4DGS, CTRL-D)

Trimming: "Change the leaves of the plants to autumn leaves" (panels: Ours, Instruct4D-to-4D, Instruct4DGS, CTRL-D)

Discussion: "Make the people look like marble Roman sculptures" (panels: Ours, Instruct4D-to-4D, Instruct4DGS, CTRL-D)

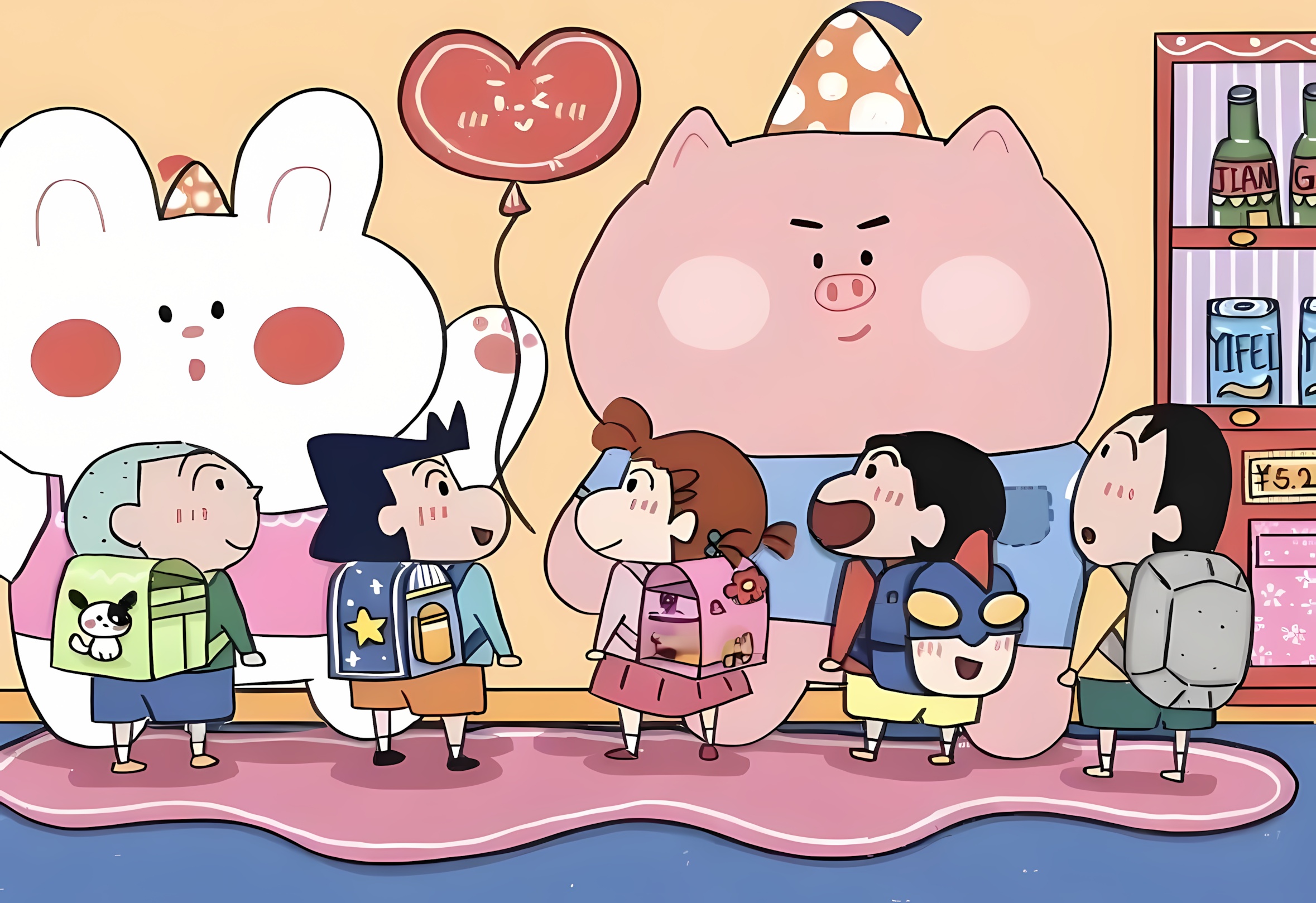

Global Style Transfer

Coffee_martini (panels: Src, Sea_coffee, Cartoon_coffee, Autumn_coffee)

Sear_steak (panels: Src, Cat_sear, Dog_sear, Cartoon_sear)

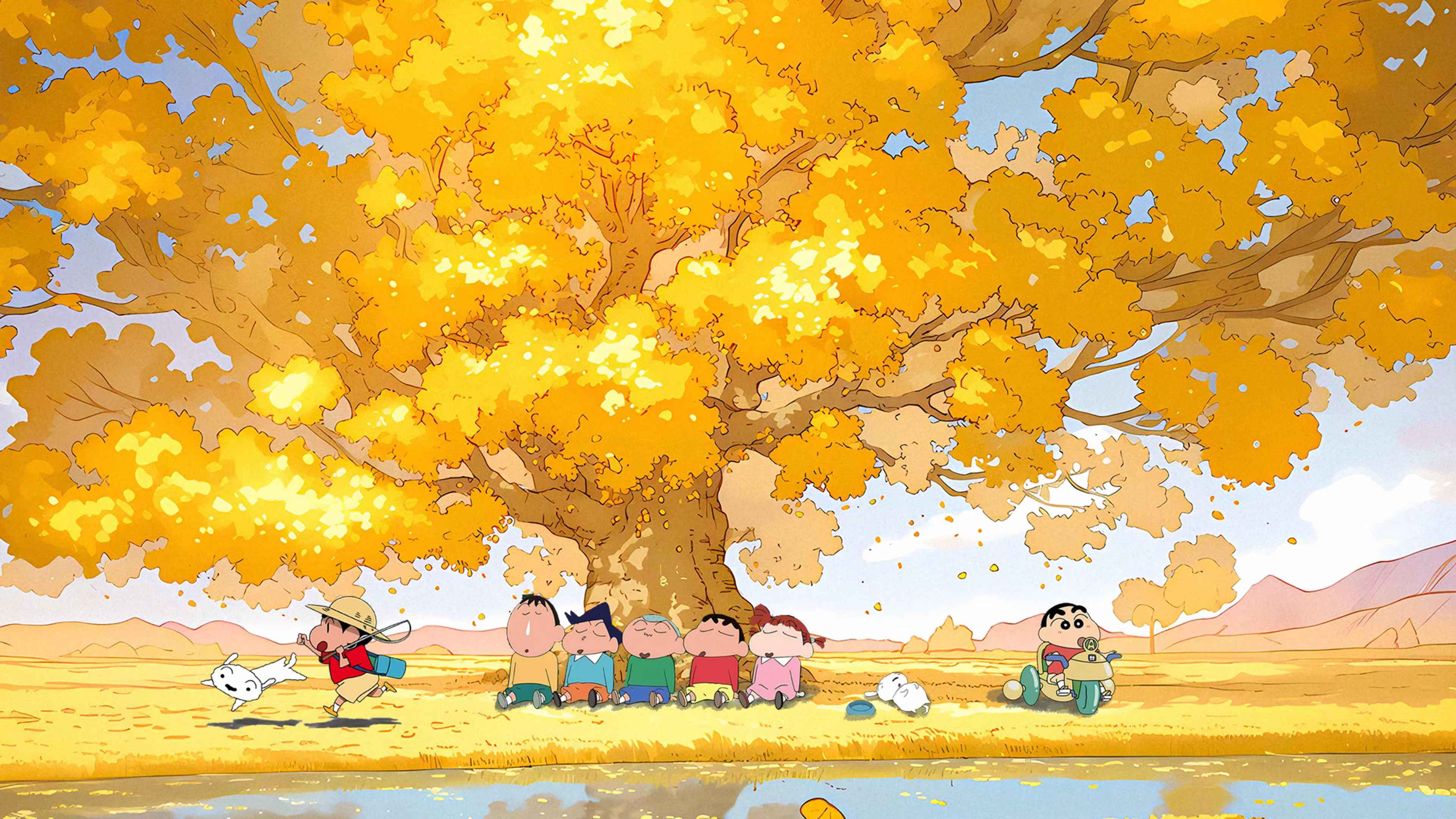

Qualitative Results with Varying Camera Poses

Sear_steak (panels: Original Scene; "Turn him into a Minecraft character"; "Make him wear shoulder armor")

Coffee_martini (panels: Original Scene; "Turn his hat into a newsboy cap"; "Make him wear a suit")

HyperNeRF (panels: Original Scene; "Make the torch carved from a flawless emerald"; "Turn the torch into Pop art style")

HyperNeRF (panels: Original Scene; "Turn the extruder into coral style"; "Make the extruder covered in glacial ice")